The AI landscape in 2026 has shifted from simple text generation to a high-stakes Digital Labor Market where autonomous agents are the new primary workforce. If you are a developer in Silicon Valley or a business leader in Austin, you’ve likely noticed that the focus is no longer just on GPT-5; instead, the industry is obsessed with Agentic AI Platforms that can execute complex workflows without human intervention.

Tracking LLM News Updates helps you spot when an Enterprise AI Operating System can do more than suggest solutions and can actually implement them across your business stack, which keeps you competitive in an increasingly automated global economy. With the recent launch of GPT-5.4 Pro and Claude 4.6, the barrier between human-led strategy and AI-led execution has narrowed sharply. This shift is a structural rebuilding of how digital work is performed. In early 2026, we saw the introduction of models that no longer require step-by-step prompting but instead operate on high-level intent, using “Reasoning Enclaves” to verify logic before taking action. For any professional, this means moving from being a “writer” to becoming an “AI Orchestrator” who manages a fleet of specialized digital workers. Keeping up with LLM News Updates is now a key differentiator between stagnant businesses and those growing quickly through AI Tools and AI Agents.

Why Are LLM News Updates Shifting Toward Agentic Workflows?

The manual data bottlenecks that once slowed down your operations are finally meeting their match in 2026. While previous years were about “chatting” with AI, the current trend focuses on “doing,” as AI Infrastructure now supports models that can browse the web, write code, and manage databases simultaneously.

High-income professionals and decision-makers are moving away from general-purpose bots toward specialized AI-Powered Software that acts as a proactive member of the team. Because LLM News Updates change daily, you must track these shifts to identify which AI Tools and AI Agents actually deliver a return on investment. This shift is fueling a massive increase in global AI spending, projected to hit record highs this year. Gartner’s recent 2026 forecast suggests that nearly 40% of enterprise applications will feature task-specific agents by the end of the year. This transition is driven by the “Agentic Process Fabric,” allowing an AI in marketing to talk directly to an AI in finance to reconcile budgets in real-time. We are seeing a move away from the “Trough of Disillusionment” as companies stop looking for a single “God-model” and start building multi-agent systems for niches like automated SEO or autonomous cybersecurity. The value is no longer in the chat window; it is in the API-driven autonomy that allows your business to scale without a linear increase in headcount. Every new LLM News Updates alert brings us closer to a fully autonomous Digital Labor Market where human input is reserved for high-level creative vision.

What Is the Core Framework Behind Today’s LLM News Updates?

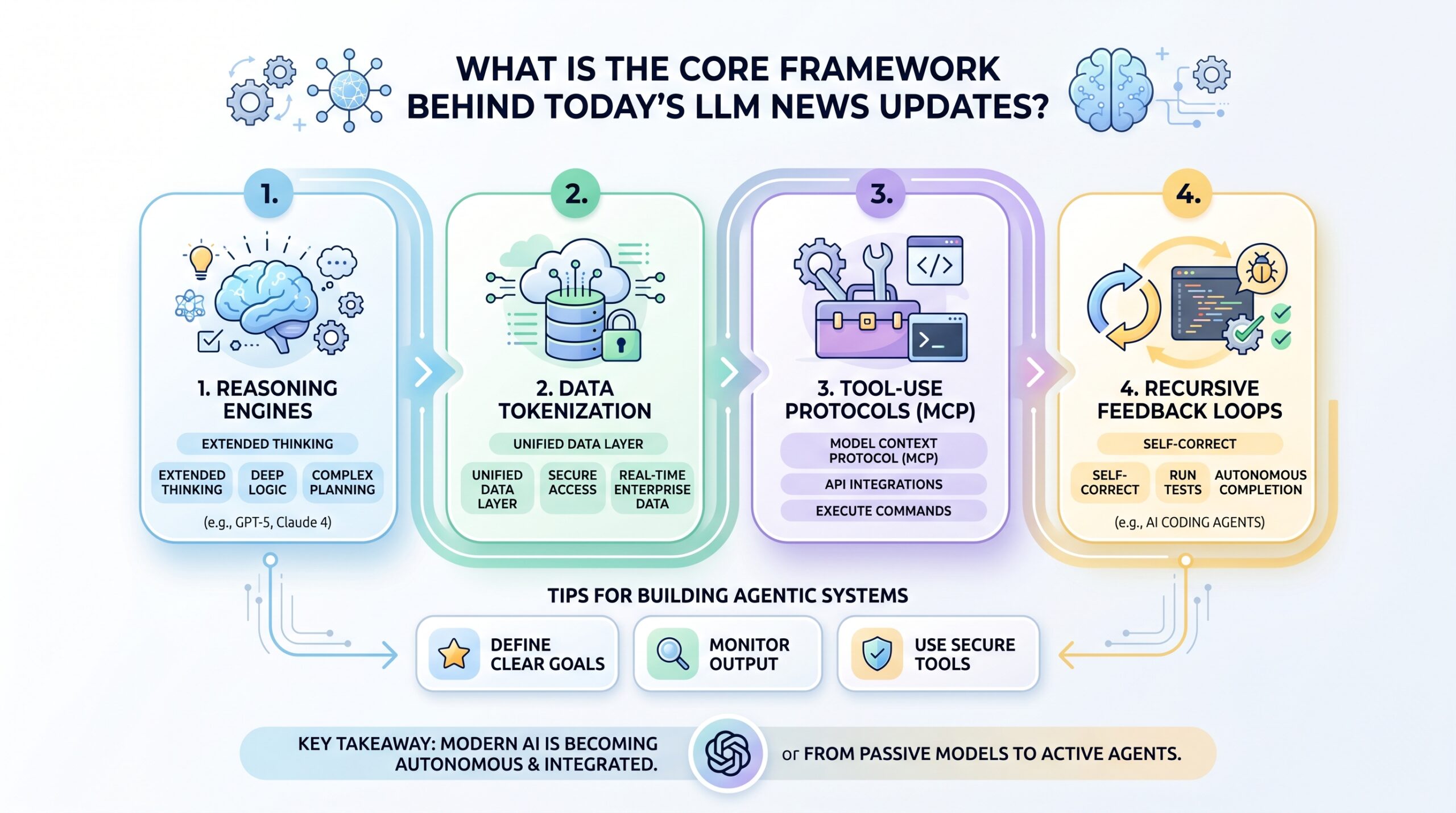

To understand the latest LLM News Updates, you must look at the architectural shift from “monolithic models” to “agentic stacks.” These new systems aren’t just larger versions of GPT-4; they are built with specific layers designed for autonomy, tool-use, and long-term memory.

Whether you are using Open Source AI or proprietary giants, the underlying mechanics generally follow a specialized four-tier structure. Analyzing LLM News Updates reveals that modularity is now more important than raw parameter count for most enterprise applications. The modern “Agentic Stack” begins with a Reasoning Engine, such as the new GPT-5.4 Thinking tier, which uses chain-of-thought processing to simulate human deliberation. Below this lies the Data Tokenization Layer, which ensures the AI can “see” your private company data without compromising security. The third layer is the Tool-Use Protocol, often utilizing the Model Context Protocol (MCP), providing the “hands” for the AI to click buttons and manage software. Finally, Recursive Feedback Loops act as a quality control mechanism, allowing the agent to test its own code or double-check its facts against a verified knowledge base before presenting a result. This four-tier architecture is the reason why 2026 models are significantly more reliable, as they catch their own errors in a “sandbox” environment. If you fail to keep up with LLM News Updates, you might overlook critical refinements to these layers that could make your current AI Assistant obsolete within weeks.

- Reasoning Engines: Models like GPT-5.4 and Claude 4.6 Opus now feature “Extended Thinking” modes for deep logic.

- Data Tokenization: A unified layer that allows an AI Assistant to securely access real-time enterprise data.

- Tool-Use Protocols (MCP): The Model Context Protocol (MCP) allows agents to use APIs and terminal commands.

- Recursive Feedback Loops: These allow AI Coding Agents to run tests and self-correct until a task is completed.
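To make the Recursive Feedback Loop idea concrete, here is a minimal Python sketch of a generate, check, retry cycle. It is an illustration under stated assumptions, not how any particular model or vendor implements it: `generate` stands in for a model call, `check` stands in for a test suite or fact check, and the names `run_with_feedback`, `fake_generate`, and `fake_check` are hypothetical.

```python
from typing import Callable, Optional, Tuple


def run_with_feedback(
    generate: Callable[[str, Optional[str]], str],
    check: Callable[[str], Optional[str]],
    task: str,
    max_attempts: int = 3,
) -> Tuple[str, int]:
    """Generate a candidate, check it, and retry with the error as feedback.

    Returns the accepted candidate and the number of attempts used.
    Raises RuntimeError if nothing passes within max_attempts.
    """
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        candidate = generate(task, feedback)
        feedback = check(candidate)  # None means the check passed
        if feedback is None:
            return candidate, attempt
    raise RuntimeError(
        f"no passing result after {max_attempts} attempts: {feedback}"
    )


# Hypothetical stand-in for a model call (assumption, not a real API).
def fake_generate(task: str, feedback: Optional[str]) -> str:
    # The first try is wrong; once feedback arrives, return a corrected answer.
    return "2 + 2 = 5" if feedback is None else "2 + 2 = 4"


# Hypothetical stand-in for a test suite (assumption, not a real API).
def fake_check(candidate: str) -> Optional[str]:
    return None if candidate.endswith("= 4") else "expected the result to be 4"


if __name__ == "__main__":
    answer, attempts = run_with_feedback(fake_generate, fake_check, "add 2 and 2")
    print(f"accepted {answer!r} after {attempts} attempt(s)")
```

Capping the loop with `max_attempts` is a deliberate choice: an agent that cannot pass its own checks should stop and escalate to a human rather than retry forever.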

How Do You Choose Between Open Source AI and Proprietary Models?

The gap between paid models and Open Source AI has virtually disappeared in 2026. Models like DeepSeek-V3.2 and Qwen 3.5 now frequently outperform their proprietary rivals on LLM Leaderboards for specific tasks like coding and math.

For a business owner or developer, the choice now comes down to your specific needs for privacy, cost, and “doctorate-level” reasoning capabilities. Recent LLM News Updates suggest that smaller, fine-tuned models are often more reliable than massive general-purpose ones for niche technical tasks. While proprietary models like Gemini 3.1 Pro offer best-in-class integration, open-weight releases like GPT-OSS-120B allow you to run powerful AI entirely on your own AI Infrastructure. The economics of AI have also flipped; open-source models now average 80% lower costs per million tokens compared to proprietary APIs, making them preferred for high-volume tasks like data scraping. However, proprietary labs still maintain a slight edge in “Zero-Shot Reasoning”—the ability to solve a completely new problem without prior examples. If you are building a specialized internal tool that requires 100% data sovereignty, the 2026 wave of open-source models like Llama 4 Scout (which features a 10-million token context window) provides a level of freedom that was unthinkable just a year ago. You must constantly monitor LLM News Updates to determine if a proprietary model has regained the lead in efficiency or if Open Source AI remains the more cost-effective choice for your specific scale.

| Feature | Proprietary (e.g., GPT-5.4, Gemini 3.1) | Open Source (e.g., DeepSeek, Llama 4) |
| --- | --- | --- |
| Best Use Case | Enterprise-Wide Automation & Multimodal | Coding, Specialized RAG, & Local Hosting |
| Data Privacy | Subject to Provider Terms | 100% Sovereign (Your Infra) |
| Context Window | Up to 1M+ Tokens | 1M (DeepSeek) to 10M (Llama 4 Scout) |
| Cost Structure | Usage-Based API Tokens | GPU/Compute Costs or Subscription |
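To see how the cost comparison plays out at your own scale, a rough back-of-the-envelope calculation helps. The Python sketch below is only an illustration: every dollar figure and token volume in it is a hypothetical placeholder, not a real price quote, and the only number taken from this article is the "80% lower" average for open-source models.

```python
def api_cost(tokens: int, price_per_million: float) -> float:
    """Usage-based cost in USD for a given number of tokens."""
    return tokens / 1_000_000 * price_per_million


def break_even_tokens(fixed_monthly_cost: float, price_per_million: float) -> float:
    """Monthly token volume at which a fixed-cost deployment matches an API bill."""
    return fixed_monthly_cost / price_per_million * 1_000_000


# All figures below are hypothetical placeholders, not real price quotes.
PROPRIETARY_PRICE = 10.0  # USD per million tokens (assumption)
OPEN_PRICE = PROPRIETARY_PRICE * (1 - 0.80)  # the article's "80% lower" average
GPU_MONTHLY_COST = 4_000.0  # USD per month for self-hosted hardware (assumption)
MONTHLY_TOKENS = 500_000_000  # tokens processed per month (assumption)

if __name__ == "__main__":
    proprietary_bill = api_cost(MONTHLY_TOKENS, PROPRIETARY_PRICE)
    open_bill = api_cost(MONTHLY_TOKENS, OPEN_PRICE)
    threshold = break_even_tokens(GPU_MONTHLY_COST, PROPRIETARY_PRICE)
    print(f"Proprietary API bill: ${proprietary_bill:,.2f}")
    print(f"Open-source API bill: ${open_bill:,.2f}")
    print(f"Self-hosting breaks even above {threshold:,.0f} tokens per month")
```

Swap in your actual quotes and volumes before drawing conclusions; the break-even point moves a lot with hardware cost and utilization.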

What Is the Implementation Roadmap for Integrating These LLM News Updates?

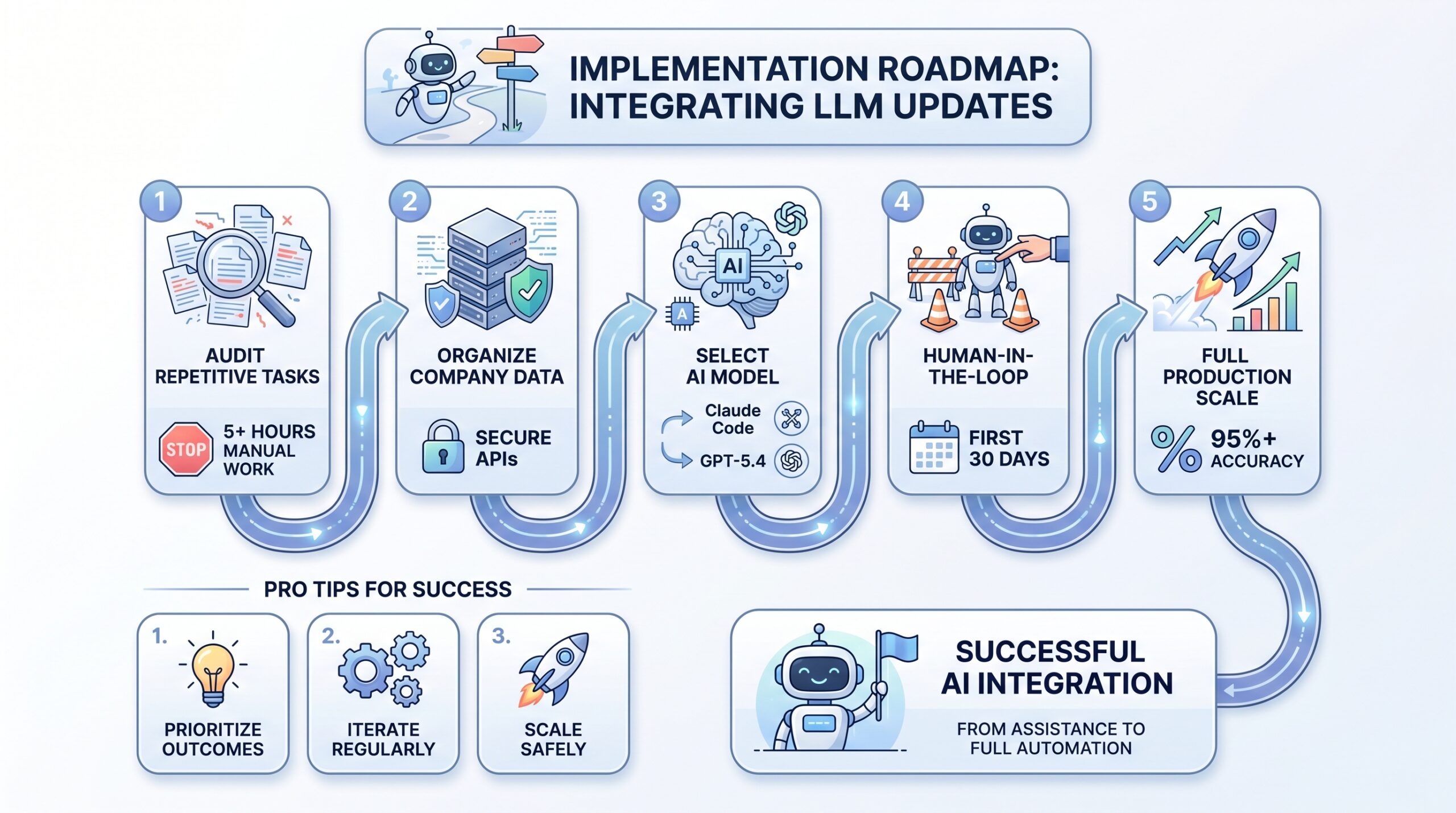

Starting your journey into the world of AI Tools and AI Agents requires a structured approach to ensure you don’t just add “noise” to your workflow. You need a roadmap that prioritizes outcomes over hype, moving from simple assistance to full-scale automation.

Following LLM News Updates helps you identify the exact moment a tool becomes “Production-Ready” for your specific industry. To begin, audit your current digital workflows and identify “High-Friction Tasks”—those that take more than five hours a week and follow a predictable pattern. Once identified, map your “Data Fabric” to ensure your agents have a clean source of truth to draw from. Selecting the right “Brain” is the next critical step; for example, you might choose Claude Code for your engineering team while utilizing GPT-5.4 for your executive operations. Implementation should always begin in a “Pilot Phase” with strict “Human-in-the-Loop” guardrails to monitor for hallucinations. As your confidence in the system grows, you can move toward “Agentic Orchestration,” where your role shifts from doing the work to auditing the output. This strategic transition ensures that your company remains agile and capable of absorbing the next wave of AI Model Releases without disrupting core operations. By keeping a close eye on LLM News Updates, you can adjust your roadmap as new AI Assistant capabilities or AI Coding Agents hit the market.

- Identify Bottlenecks: Audit tasks that take 5+ hours of manual, repetitive digital labor.

- Audit Your Data Fabric: Ensure company data is organized and accessible via secure APIs.

- Choose Your “Brain”: Select a model from the LLM Leaderboards—use Claude Code for dev or GPT-5.4 for operations.

- Establish Guardrails: Implement “Human-in-the-Loop” systems for the first 30 days.

- Scale and Refine: Move to full production once accuracy hits 95%+.
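The checklist above can be turned into a simple gate that tells you when a pilot is ready to graduate. The Python sketch below is one possible way to encode it, not a standard tool: the thresholds (5+ hours a week, 30 days, 95% accuracy) come from the steps above, while the names `PilotStatus`, `is_high_friction`, and `next_phase` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PilotStatus:
    days_in_pilot: int      # days the agent has run with human review
    reviewed_outputs: int   # outputs a human has checked
    correct_outputs: int    # outputs the reviewer accepted


def is_high_friction(hours_per_week: float, is_repetitive: bool) -> bool:
    """A task qualifies if it is repetitive and takes 5+ hours a week."""
    return is_repetitive and hours_per_week >= 5


def accuracy(status: PilotStatus) -> float:
    """Share of reviewed outputs that were accepted (0.0 if none reviewed)."""
    if status.reviewed_outputs == 0:
        return 0.0
    return status.correct_outputs / status.reviewed_outputs


def next_phase(
    status: PilotStatus, min_days: int = 30, min_accuracy: float = 0.95
) -> str:
    """Stay in the pilot until both the time and accuracy gates are met."""
    if status.days_in_pilot < min_days:
        return "pilot"
    if accuracy(status) < min_accuracy:
        return "pilot"
    return "production"


if __name__ == "__main__":
    status = PilotStatus(days_in_pilot=45, reviewed_outputs=200, correct_outputs=192)
    print(f"accuracy={accuracy(status):.2%}, next phase: {next_phase(status)}")
```

Requiring both gates means a fast-but-unreviewed agent and a long-running-but-inaccurate agent both stay under human supervision.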

How Do Technical Authority and Ethics Shape LLM News Updates?

As agents gain more autonomy, the conversation around AI News and Updates is pivoting toward safety and data ethics. You cannot deploy an AI Assistant with access to your financial records without ironclad security protocols.

The industry is responding with “Zero Trust” enclaves for AI, ensuring that your data is never used to train the provider’s next model. Tracking LLM News Updates on regulatory changes is now a full-time job for compliance officers in the tech sector. Furthermore, maintaining EEAT (Experience, Expertise, Authoritativeness, and Trustworthiness) is essential for your brand’s reputation. Ethical AI usage means being transparent about when a human is involved versus an agent. By prioritizing these ethical standards, you build long-term trust and stay ahead of the evolving regulatory landscape in tech-heavy states like California. Comprehensive LLM News Updates often highlight the latest breakthroughs in AI alignment and safety protocols, which are vital for Business Owners exploring AI tools. You must ensure your AI Assistant operates within these ethical boundaries to avoid legal liabilities as the Digital Labor Market matures.

What Is the Ultimate Tooling & Resource Checklist for LLM News Updates?

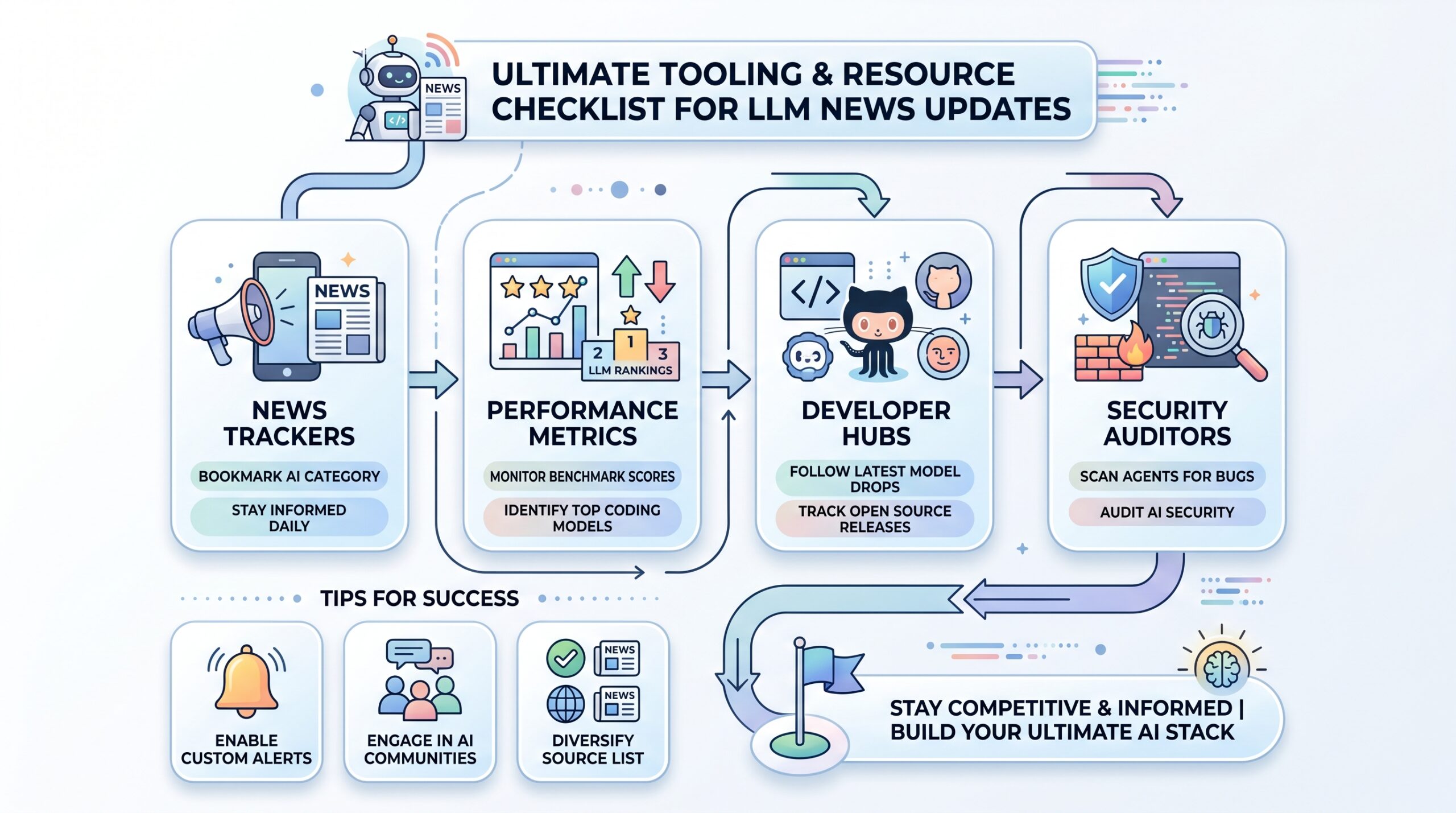

To stay competitive, you need a “stack” of resources that keeps you informed of Daily AI News Source changes and model shifts. Whether you are a student or a manager, these tools are your eyes and ears in the fast-moving AI sector.

Having a reliable checklist prevents “Info-Overload” and keeps your focus on what actually drives growth. Many professional traders now use LLM News Updates to make split-second decisions on tech stocks. The most essential resource in 2026 is a live LLM Leaderboard like Chatbot Arena, which provides objective data on model performance across technical domains. For developers, keeping a close eye on Hugging Face and GitHub is mandatory to catch “Zero-Day” model drops that could give you a pricing advantage. Additionally, you should integrate “AI Security Auditors” into your workflow to scan for prompt-injection vulnerabilities. By curating a list of high-signal sources, such as the Artificial Intelligence Category on USATopGuestPostSite, you can filter out the marketing fluff and focus on technical breakthroughs. This disciplined approach to information gathering is what separates the AI leaders from the laggards in a year defined by rapid-fire innovation. Constant LLM News Updates from these sources will ensure you always have the most efficient AI-Powered Software in your arsenal.

- News Trackers: Bookmark the Artificial Intelligence Category for deep-dive analysis.

- Performance Metrics: Use LiveCodeBench and Chatbot Arena to see the Best LLM for Coding in real-time.

- Developer Hubs: Follow GitHub and Hugging Face to catch the latest Open Source AI drops and AI Model Releases.

- Security Auditors: Utilize tools like Cisco DefenseClaw to scan agents for vulnerabilities.

What Is the Global and Industry Impact of These LLM News Updates?

The rise of the Digital Labor Market is fundamentally altering how we view work and productivity across the globe. We are moving toward a future where “entry-level” roles are augmented by AI Coding Agents, allowing human workers to move into more strategic positions.

This isn’t just about saving time; it’s about expanding what is possible for a small team in Irving or a startup in Austin to achieve. Major LLM News Updates frequently report on how these efficiencies are boosting GDP in developed nations. The “why” behind this evolution is simple: efficiency is the new global currency. As AI Infrastructure becomes a structural force in economic expansion, those who embrace the Enterprise AI Operating System will lead their respective industries. We are moving away from a world of “tools” and into a world of “partners,” where your AI works alongside you to build the future. If you want to lead, your first step is mastering the art of filtering LLM News Updates for actionable insights. The broader impact of these LLM News Updates suggests that by 2030, the synergy between human creativity and AI execution will be the standard for all global business operations.

Frequently Asked Questions

What is the Best LLM for Coding today?

As of March 2026, GPT-5.4 Codex and Gemini 3.1 Pro lead proprietary rankings, while GLM-5 is the top-rated open-source option.

How often should I check for LLM News Updates?

You should follow a Daily AI News Source because the field moves fast enough that a new “S-Tier” model can drop overnight.

What are AI Coding Agents?

Unlike basic assistants, agents like Claude Code or Cursor can plan, write, test, and deploy entire features autonomously from a high-level instruction.

Is Open Source AI safe for enterprise use?

Yes, provided you use models with permissive licenses like Apache 2.0 (e.g., GPT-OSS-120B) and host them on secure AI Infrastructure.

Can an AI Assistant manage my entire business?

Not yet. According to the latest LLM News Updates, an AI Assistant can handle roughly 60-80% of repetitive administrative and technical workflows when properly integrated.

Join the AI Revolution: Share Your Expertise Today

The AI space is evolving fast, and fresh perspectives matter more than ever. If you are an AI developer, a tech-driven business owner, or someone deeply involved in digital growth, this is your opportunity to contribute and be heard.

By sharing your insights, you can reach a high-intent audience actively searching for updates on agentic AI platforms, AI-powered software, and the future of the digital labor market. Whether you want to break down technical topics, explore real-world applications, or analyze trends shaping modern AI infrastructure, your expertise can make a real impact.

We believe the future of AI is built through shared knowledge and practical insights. Contributing to our platform not only helps others stay informed but also strengthens your authority, expands your reach, and connects you with professionals who are actively shaping the next phase of AI innovation.

Contact Us:

Official Website: https://usatopguestpostsite.org